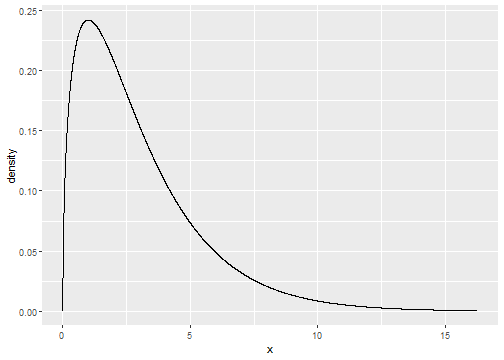

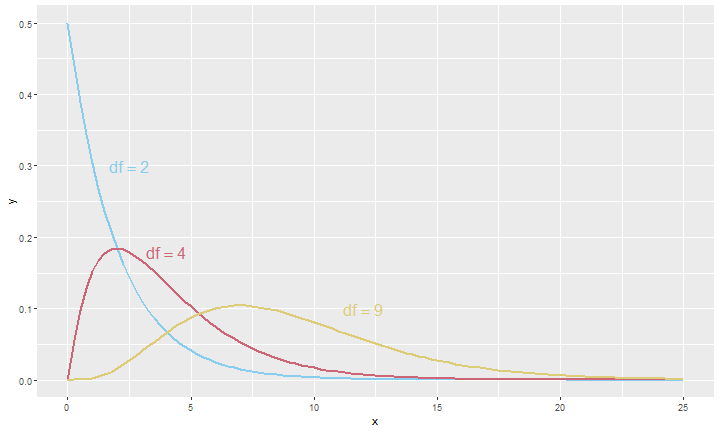

class: center, middle, inverse, title-slide

# Lecture 11

### JMG

### MATH 204

---

# Inference for Categorical Data

In this lecture, we will examine two new inferential techniques:

--

- Testing for goodness of fit using chi-square, which is applied to a categorical variable with more than two levels. This is commonly used in two circumstances:

--

  - Given a sample of cases that can be classified into several groups, determine whether the sample is representative of the general population.

--

  - Evaluate whether data resemble a particular distribution, such as a normal distribution or a geometric distribution.

--

- Testing for independence in two-way tables.

---

# Learning Objectives

- In this lecture, we cover some inferential techniques for categorical data. After this lecture you should be able to

--

  - Identify one-way and two-way table problems.

--

  - Work with a chi-square statistic and distribution.

--

  - Use the `chisq.test` function in R to conduct hypothesis tests.

---

# Video on Goodness of Fit

<div class="vembedr" align="center">
<div>
<iframe src="https://www.youtube.com/embed/Uk36WGxujkc" width="533" height="300" frameborder="0" allowfullscreen=""></iframe>
</div>
</div>

---

# Video on Two-Way Tables

<div class="vembedr" align="center">
<div>
<iframe src="https://www.youtube.com/embed/yjrsfNdja0U" width="533" height="300" frameborder="0" allowfullscreen=""></iframe>
</div>
</div>

---

# Motivating Example

Consider the racial composition of a sample of 275 jurors, together with the proportion of registered voters in each racial group:

--

<table class="table" style="margin-left: auto; margin-right: auto;"> <thead> <tr> <th style="text-align:left;"> race </th> <th style="text-align:right;"> black </th> <th style="text-align:right;"> hispanic </th> <th style="text-align:right;"> white </th> <th style="text-align:right;"> other </th> <th style="text-align:right;"> total </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Representation in juries </td> <td style="text-align:right;"> 26.00 </td> <td style="text-align:right;"> 25.00 </td> <td
style="text-align:right;"> 205.00 </td> <td style="text-align:right;"> 19.00 </td> <td style="text-align:right;"> 275 </td> </tr> <tr> <td style="text-align:left;"> Registered voters </td> <td style="text-align:right;"> 0.07 </td> <td style="text-align:right;"> 0.12 </td> <td style="text-align:right;"> 0.72 </td> <td style="text-align:right;"> 0.09 </td> <td style="text-align:right;"> 1 </td> </tr> </tbody> </table>

--

The observed proportion of jurors in each group is:

```
## # A tibble: 4 x 3
##   race         n      p
##   <fct>    <int>  <dbl>
## 1 black       26 0.0945
## 2 hispanic    25 0.0909
## 3 other       19 0.0691
## 4 white      205 0.745
```

--

- We would like to know whether the jurors are representative of the population of registered voters.

--

- This is an instance of the first circumstance described earlier: given a sample of cases that can be classified into several groups, determine whether the sample is representative of the general population.

---

# One-Way Tables

- If we take the registered-voter proportions (the bottom row of the table on the previous slide) as the true population proportions, then the expected count for each group is 275 times its proportion; for example, `\(275 \times 0.07 = 19.25\)`. Placing the observed and expected counts side by side gives a so-called **one-way table**:

<table class="table" style="margin-left: auto; margin-right: auto;"> <thead> <tr> <th style="text-align:left;"> race </th> <th style="text-align:right;"> black </th> <th style="text-align:right;"> hispanic </th> <th style="text-align:right;"> white </th> <th style="text-align:right;"> other </th> <th style="text-align:right;"> total </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Observed count </td> <td style="text-align:right;"> 26 </td> <td style="text-align:right;"> 25 </td> <td style="text-align:right;"> 205 </td> <td style="text-align:right;"> 19 </td> <td style="text-align:right;"> 275 </td> </tr> <tr> <td style="text-align:left;"> Expected count </td> <td style="text-align:right;"> 19.25 </td> <td style="text-align:right;"> 33 </td> <td style="text-align:right;"> 198 </td> <td style="text-align:right;"> 24.75 </td> <td style="text-align:right;"> 275 </td> </tr> </tbody> </table>

--

- From a one-way
table we can produce a test statistic that, when the null hypothesis is true, approximately follows a well-known distribution. Specifically, we add up, over the four groups, the squared difference between the observed and expected counts divided by the expected count:

`$$\frac{(26-19.25)^2}{19.25} + \frac{(25-33)^2}{33} + \frac{(205-198)^2}{198} + \frac{(19-24.75)^2}{24.75}$$`

--

- This value is

```r
(26-19.25)^2/19.25 + (25-33)^2/33 + (205-198)^2/198 + (19-24.75)^2/24.75
```

```
## [1] 5.88961
```

---

# Chi-Square Distributions

- In order to conduct inference on data in one-way tables, we need a new family of distributions called **chi-square distributions**. Each chi-square distribution is characterized by a single parameter called its degrees of freedom (df). Below we plot the chi-square density function with 3 degrees of freedom:

```r
library(ggformula)  # provides gf_dist()
gf_dist("chisq", df = 3)
```

<!-- -->

---

# Chi-Square for Different df's

<!-- -->

---

# Chi-Square Test Conditions

- In order to use a chi-square distribution to compute a p-value, we need to check two conditions:

--

  - Independence. Each case that contributes a count to the table must be independent of all the other cases in the table.

--

  - Sample size / distribution. Each category (cell of the table) must have an expected count of at least 5.

---

# Chi-Square Test for One-Way Table

Suppose we are to evaluate whether there is convincing evidence that a set of observed counts `\(O_{1}\)`, `\(O_{2}\)`, `\(\ldots\)`, `\(O_{k}\)` in `\(k\)` categories differ from what we would expect under a null hypothesis by more than chance alone would explain. Denote the *expected counts* that are based on the null hypothesis by `\(E_{1}\)`, `\(E_{2}\)`, `\(\ldots\)`, `\(E_{k}\)`. If each expected count is at least 5 and the null hypothesis is true, then the test statistic

`$$X^{2} = \frac{(O_{1}-E_{1})^2}{E_{1}} + \frac{(O_{2}-E_{2})^2}{E_{2}} + \cdots + \frac{(O_{k}-E_{k})^2}{E_{k}}$$`

approximately follows a chi-square distribution with `\(k-1\)` degrees of freedom. Note that this test statistic is never negative, since each term is a square divided by a positive expected count.

--

The p-value for this test statistic is found by looking at the upper tail of this chi-square distribution.
We consider the upper tail because larger values of `\(X^2\)` would provide greater evidence against the null hypothesis.

---

# Example Chi-Square Test

- Since the jury data have `\(k=4\)` categories, we expect the statistic for the one-way table to follow a chi-square distribution with `\(k-1=3\)` degrees of freedom.

--

- Then the probability of observing a value as extreme as or more extreme than the value obtained from the sample data is

```r
1 - pchisq(5.89, 3)
```

```
## [1] 0.1170863
```

--

- Alternatively:

```r
pchisq(5.89, 3, lower.tail = FALSE)
```

```
## [1] 0.1170863
```

--

- We just computed a p-value, but which null hypothesis does this p-value test?

---

# Null Hypothesis for One-Way Tables

- In our example, we would want to test:

--

  - `\(H_{0}:\)` The jurors are a random sample, that is, there is no racial bias in who serves on a jury, and the observed counts reflect natural sampling fluctuation.

--

  - `\(H_{A}:\)` The jurors are not randomly sampled, that is, there is racial bias in juror selection.

--

- Using the data, we can conduct the chi-square test as follows:

```r
chisq.test(c(26, 25, 205, 19), p = c(0.07, 0.12, 0.72, 0.09))
```

```
## 
##  Chi-squared test for given probabilities
## 
## data:  c(26, 25, 205, 19)
## X-squared = 5.8896, df = 3, p-value = 0.1171
```

--

- Since the p-value (about 0.117) is greater than 0.05, we fail to reject `\(H_{0}\)` at the `\(\alpha = 0.05\)` level: these data do not provide convincing evidence of racial bias in juror selection.

---

# More Examples

- Let's see some more examples.

--

- Let's look at exercise 6.33 from the textbook on page 239.
The table for the data looks as follows:

<table class="table" style="margin-left: auto; margin-right: auto;"> <thead> <tr> <th style="text-align:left;"> textbook format </th> <th style="text-align:right;"> purchased </th> <th style="text-align:right;"> printed </th> <th style="text-align:right;"> online </th> <th style="text-align:right;"> total </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Observed count </td> <td style="text-align:right;"> 71 </td> <td style="text-align:right;"> 30 </td> <td style="text-align:right;"> 25 </td> <td style="text-align:right;"> 126 </td> </tr> <tr> <td style="text-align:left;"> Expected proportion </td> <td style="text-align:right;"> 0.6 </td> <td style="text-align:right;"> 0.25 </td> <td style="text-align:right;"> 0.15 </td> <td style="text-align:right;"> 1 </td> </tr> </tbody> </table>

--

- Each expected count is the total, 126, times the expected proportion for that category:

```
## purchased   printed    online 
##      75.6      31.5      18.9 
```

--

- Thus, our `\(X^2\)` statistic is

```r
(X2 <- (71-75.6)^2/75.6 + (30-31.5)^2/31.5 + (25-18.9)^2/18.9)
```

```
## [1] 2.320106
```

---

# Example Continued

- With `\(k=3\)` categories, the appropriate degrees of freedom is `\(k-1=2\)`. Therefore, the upper-tail area is

```r
pchisq(X2, 2, lower.tail = FALSE)
```

```
## [1] 0.3134696
```

--

- Our hypotheses are `\(H_{0}:\)` the distribution of the format of the book used follows the expected distribution, vs. `\(H_{A}:\)` the distribution of the format of the book used does not follow the expected distribution. Since the p-value (about 0.31) is greater than 0.05, we fail to reject the null hypothesis at the `\(\alpha = 0.05\)` level of significance.

--

- We can confirm our result using R:

```r
chisq.test(c(71, 30, 25), p = c(0.6, 0.25, 0.15))
```

```
## 
##  Chi-squared test for given probabilities
## 
## data:  c(71, 30, 25)
## X-squared = 2.3201, df = 2, p-value = 0.3135
```

---

# Two-Way Tables

- A one-way table describes counts for each outcome in a single categorical variable.
--

- A two-way table describes counts for combinations of outcomes of two categorical variables. Here we focus on two-way tables in which at least one of the two variables has more than two levels.

--

- When we consider a two-way table, we often want to know whether the two variables are related in any way, that is, whether they are dependent or independent.

---

# Null Hypothesis for Two-Way Tables

- For a two-way table problem, the typical hypotheses are of the form

--

  - `\(H_{0}:\)` The two variables are independent.

--

  - `\(H_{A}:\)` The two variables are dependent.

--

- Let's look at an example.

---

# Offshore Drilling Example

- Consider data recording, for each person, their position on offshore drilling and whether they are a college graduate. The first few rows of the data frame `my_offshore_drilling` are shown below:

```
## # A tibble: 6 x 2
##   position college_grad
##   <fct>    <fct>       
## 1 support  yes         
## 2 support  yes         
## 3 support  yes         
## 4 support  yes         
## 5 support  yes         
## 6 support  yes         
```

--

- Let's look at the corresponding two-way table, with row and column totals added by `addmargins`:

```r
addmargins(table(my_offshore_drilling$position, my_offshore_drilling$college_grad))
```

```
##              
##                no yes Sum
##   do_not_know 131 104 235
##   oppose      126 180 306
##   support     132 154 286
##   Sum         389 438 827
```

---

# Hypothesis Test for Offshore Drilling

- We would like to test the hypotheses:

--

  - `\(H_{0}:\)` College graduate status and position on offshore drilling are independent.

--

  - `\(H_{A}:\)` College graduate status and position on offshore drilling are not independent.

--

- In R, this test is a single call to `chisq.test`:

```r
chisq.test(my_offshore_drilling$position, my_offshore_drilling$college_grad)
```

```
## 
##  Pearson's Chi-squared test
## 
## data:  my_offshore_drilling$position and my_offshore_drilling$college_grad
## X-squared = 11.461, df = 2, p-value = 0.003246
```

--

- Since the p-value (about 0.0032) is less than 0.05, we reject the null hypothesis at the `\(\alpha=0.05\)` significance level: the data provide convincing evidence that college graduate status and position on offshore drilling are associated.

---

# Tests By Hand

- Let's see how to conduct the previous test by hand.

--

- The two things we need to know are the value of the `\(X^2\)` statistic and the appropriate number of degrees of freedom to use.
--

- When applying the chi-square test to a two-way table, we use

`$$df = (R-1) \times (C-1)$$`

where `\(R\)` is the number of rows in the table and `\(C\)` is the number of columns, not counting the row and column totals.

--

- Thus, in our example, with `\(R=3\)` positions and `\(C=2\)` levels of college graduate status, `\(df = (3-1)\times (2-1) = 2\)`.

---

# Computing `\(X^2\)`

- As before, summing over every cell of the table,

`$$X^2 = \sum \frac{(O-E)^2}{E}$$`

--

- The question is, how do we compute the expected counts ( `\(E_{ij}\)` ) for a two-way table? The answer is

`$$\text{Expected Count}_{\text{row }i \text{ col }j} = E_{ij} = \frac{\text{row }i \text{ total} \times \text{column }j \text{ total}}{\text{table total}}$$`

--

- For example, the expected count of non-graduates whose position is `do_not_know` is `\(E_{11} = \frac{235 \times 389}{827} \approx 110.54\)`. Let's work out the remaining cells on the board.

---

# Hypothesis Testing Summary

- To date, we have covered the following tests:

--

  - Single proportion and difference of proportions using a "z-test."

--

  - Single mean, paired mean, and difference of means using a "t-test."

--

  - Comparing many means with ANOVA.

--

  - One-way and two-way table tests for categorical variables with chi-square.

--

  - Simple linear regression via ordinary least squares for a pair of numeric variables.

--

- We also know how to construct confidence intervals for parameter estimates for proportions, difference of proportions, mean, difference of means, and intercept and slope parameters for a linear model.

---

# Next Topic

- Our next topic covers regression in more depth. This video will get you started:

<div class="vembedr" align="center">
<div>
<iframe src="https://www.youtube.com/embed/sQpAuyfEYZg" width="533" height="300" frameborder="0" allowfullscreen=""></iframe>
</div>
</div>
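---

# Appendix: Offshore Drilling Test by Hand in R

As a supplement to working the offshore drilling example on the board, here is a minimal sketch of the same calculation in base R. It assumes the observed counts are exactly those in the offshore drilling two-way table, with the totals removed; the object names (`observed`, `expected`, `X2`, `deg_free`, `p_value`) are illustrative choices, not part of the original lecture code. The expected counts use the "row total times column total over table total" formula, computed for every cell at once with `outer()`.

```r
# Observed counts from the offshore drilling two-way table (totals removed).
# Rows are positions; columns are college graduate status.
observed <- matrix(c(131, 104,
                     126, 180,
                     132, 154),
                   nrow = 3, byrow = TRUE,
                   dimnames = list(position = c("do_not_know", "oppose", "support"),
                                   college_grad = c("no", "yes")))

# Expected count for cell (i, j): (row i total * column j total) / table total.
# outer() forms every product of a row total with a column total.
expected <- outer(rowSums(observed), colSums(observed)) / sum(observed)

# Chi-square statistic: sum of (O - E)^2 / E over all six cells.
X2 <- sum((observed - expected)^2 / expected)

# Degrees of freedom: (R - 1) * (C - 1), counting only the table body.
deg_free <- (nrow(observed) - 1) * (ncol(observed) - 1)

# p-value: upper-tail area of the chi-square distribution.
p_value <- pchisq(X2, deg_free, lower.tail = FALSE)

round(expected, 2)
c(X2 = X2, df = deg_free, p_value = p_value)
```

If the counts are entered correctly, these values should agree with the `X-squared = 11.461, df = 2, p-value = 0.003246` reported by `chisq.test` for the offshore drilling data; calling `chisq.test(observed)` directly on the matrix is another way to check.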