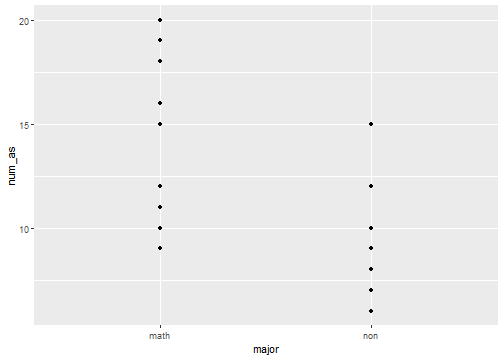

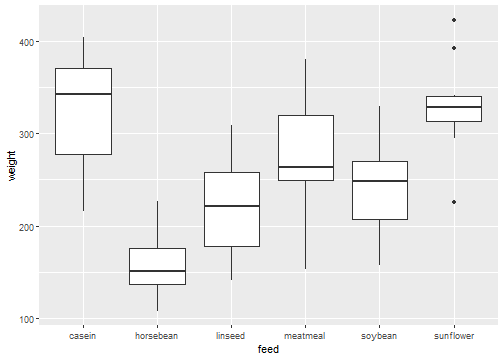

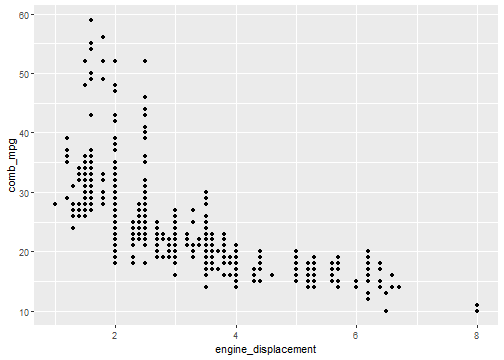

class: center, middle, inverse, title-slide .title[ # Lecture 13 ] .author[ ### JMG ] .institute[ ### MATH 204 ] --- # Intro to Nonparametric Stats - All of the inferential methods we have studied so far rely on certain distributional assumptions. For example, in many cases we have either assumed or required data to be normally distributed. -- - These distributional assumptions relate to probability distributions, *e.g.*, normal, that depend on parameters, *e.g.*, `\(\mu\)` and `\(\sigma\)` for a normal distribution. -- - What do we do if the necessary distributional assumptions or requirements can not be met? -- - In some cases, there is a [**nonparametric**](https://en.wikipedia.org/wiki/Nonparametric_statistics) parallel to the inferential methods we have studied so far that allow us to weaken the conditions, say for example to conduct a hypothesis test. -- - Roughly speaking, a nonparametric test is a hypothesis test that does not require any specific conditions concerning the shape of populations or the value of any population parameters. -- - We cover some of these nonparametric statistics in this lecture. Note that this material is not covered in our textbook [OpenIntro Statistics](https://openintro.org/book/os/). --- # Parametric Vs. Nonparametric - Below we list some inferential methods we have already studied together with their parallel nonparametric test. -- - t-test for difference of means; [Wilcoxon rank-sum test](https://en.wikipedia.org/wiki/Mann%E2%80%93Whitney_U_test) (or Mann-Whitney-Wilcoxon test) -- - paired t-test; [Wilcoxon signed-rank test](https://en.wikipedia.org/wiki/Wilcoxon_signed-rank_test) -- - ANOVA (F-test); [Kruskal-Wallis test](https://en.wikipedia.org/wiki/Kruskal%E2%80%93Wallis_one-way_analysis_of_variance). -- - We also look at a [sign test](https://en.wikipedia.org/wiki/Sign_test) where we are interested in assessing differences between to groups of paired observations but do not taken into account magnitudes of differences. --- # Learning Objectives - After this lecture, you should -- - have a sense of how the rank-sum, signed-rank, Kruskal-Wallis, and sign tests work, -- - have a sense of when to use the rank-sum, signed-rank, Kruskal-Wallis, and sign tests -- - know how to conduct these tests using R. --- # Why Use Nonparametric Stats? - In general, conclusions drawn from non-parametric methods are not as powerful as the parametric ones. -- - However, as nonparametric methods make fewer assumptions, they are more flexible, more robust, and easier to run. -- - Nonparametric methods are especially useful when sample sizes are small. -- - [Nonparametric statistics](https://en.wikipedia.org/wiki/Nonparametric_statistics) is a significant and substantial subfield of statistics. However, it should be noted that it is difficult to give a simple and precise definition of nonparametric statistics. -- - What we cover in this lecture does not even begin to touch upon many of the most important parts of nonparametric inference. --- # Rank-sum Test - We begin our introduction to nonparametric methods with a discussion of the Wilcoxon rank-sum test which is also known as the Mann–Whitney U test. -- - Suppose we have the following question: > Do math majors get more A's than non-math majors? -- - To answer this question, we collect data. For example: ``` ## # A tibble: 6 × 2 ## major num_as ## <chr> <dbl> ## 1 non 8 ## 2 math 12 ## 3 non 12 ## 4 math 19 ## 5 non 10 ## 6 non 9 ``` -- - Note that there are 10 math majors and 13 non-math majors: ``` ## ## math non ## 10 13 ``` --- # Plot of Data - Here is a plot of our data: <!-- --> --- # Assumptions - In the previous data set, there is no reason to expect that the number of A's is normally distributed. Furthermore, the sample sizes are small. -- - The Wilcoxon rank-sum test only requires us to assume that -- - All of the observations are independent and the responses are at least ordinal in that we can at least say whether of any two observations, which is greater. -- - Rank-sum tests -- - `\(H_{0}:\)` The distributions of both populations are equal. -- - `\(H_{A}:\)` The distributions of the populations are not equal. --- # Example Rank-Sum Test - In R, we conduct a rank-sum test using ```r wilcox.test(num_as~major,data=df_1,correct=FALSE) ``` ``` ## ## Wilcoxon rank sum test ## ## data: num_as by major ## W = 114, p-value = 0.002179 ## alternative hypothesis: true location shift is not equal to 0 ``` -- - If our significance level is `\(\alpha=0.05\)`, then we would reject the null hypothesis that the distribution of the number of A's is the same for the two groups. --- # Signed-rank Test - Now we consider the Wilcoxon signed-rank test. -- - Suppose we have the following question: > Do you work out longer if you listen to music while exercising? -- - We randomly select 10 students, and record their workout time in minutes for one workout session without music, and for a second workout session with music. The data might look like follows: ```r (no_music <- c(30,25,20,22,36,40,35,27,30,35)) ``` ``` ## [1] 30 25 20 22 36 40 35 27 30 35 ``` ```r (with_music <- c(32,27,20,25,30,39,30,30,28,32)) ``` ``` ## [1] 32 27 20 25 30 39 30 30 28 32 ``` -- - It is not reasonable to assume that this data is normally distributed. --- # Signed-Rank Test - We want to test the hypothesis: -- - `\(H_{0}:\)` There is no difference in the length of workout sessions. -- - `\(H_{A}:\)` There is a difference in the length of workout sessions. -- - The signed-rank test can be used for this test. --- # Example Signed-Rank Test - In R, we conduct a signed-rank test using ```r wilcox.test(no_music,with_music,paired=TRUE,correct=FALSE) ``` ``` ## ## Wilcoxon signed rank test ## ## data: no_music and with_music ## V = 27, p-value = 0.5913 ## alternative hypothesis: true location shift is not equal to 0 ``` -- - We fail to reject the null hypothesis at the `\(\alpha=0.05\)` significance level. --- # Kruskal-Wallis - The Kruskal-Wallis test parallels our ANOVA test for comparing many means. -- - The null and alternative hypotheses for the Kruskal-Wallis test are -- - `\(H_{0}:\)` There is no difference in the distribution of the populations. -- - `\(H_{A}:\)` There is a difference in the distributions of the populations. -- - Two conditions for using the Kruskal-Wallis test are -- - each sample must be randomly selected, and -- - the size of each sample must be at least 5. --- # Example - Consider again out data for the weights of chicken fed with different types of feed: <!-- --> --- # Kruskal-Wallis Test - Let's run a Kruskal-Wallis test on the chicken weights data: ```r kruskal.test(weight~feed,data=chickwts) ``` ``` ## ## Kruskal-Wallis rank sum test ## ## data: weight by feed ## Kruskal-Wallis chi-squared = 37.343, df = 5, p-value = 5.113e-07 ``` -- - If our significance level is `\(\alpha=0.05\)`, then we will decide to reject the null hypothesis that the distribution of weights is the same across all groups. -- - Let's compare the results from Kruskal-Wallis with results from an ANOVA test. --- # Comparing Tests - ANOVA ```r summary(aov(weight~feed,data=chickwts)) ``` ``` ## Df Sum Sq Mean Sq F value Pr(>F) ## feed 5 231129 46226 15.37 5.94e-10 *** ## Residuals 65 195556 3009 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` -- - Kruskal-Wallis ```r kruskal.test(weight~feed,data=chickwts) ``` ``` ## ## Kruskal-Wallis rank sum test ## ## data: weight by feed ## Kruskal-Wallis chi-squared = 37.343, df = 5, p-value = 5.113e-07 ``` --- # Sign Test - The sign test is a nonparametric test that can be used to test a population median against a hypothesized value `\(k\)`. -- - Suppose we have the following data: ```r x <- c(4.8,4.0,3.8,4.3,3.9,4.6,3.1,3.7) ``` -- - As an example, suppose we want to test the hypothesis -- - `\(H_{0}:\)` This data comes from a distribution with median 3.55, versus -- - `\(H_{A}:\)` The data comes from a distribution with median not equal to 3.55. -- - To proceed, we compute the sign of the difference between each data value and 3.55: ```r sign(x-3.55) ``` ``` ## [1] 1 1 1 1 1 1 -1 1 ``` --- # Sign Test Example - From the data, we see that 7 out of 8 of the observations have a value that is greater than the hypothesized median. -- - If the null hypothesis were true, that is, if the population median were 3.55 then we expect that half of the population would have a value less than the median and half would have a value greater than the median. -- - So what is the probability of getting 7 out of 8 positive values when the probability of getting a positive value is the same as getting a negative value. -- - Observe that we have reduced our problem to a situation where we expect under the null hypothesis the outcomes to follow a binomial distribution with probability of success equal to 0.5 -- - Thus, the probability of observing an outcome that is as or more extreme than getting 7 of 8 positive values under the null hypothesis is ```r pbinom(1,8,0.5) + (1 - pbinom(6,8,0.5)) ``` ``` ## [1] 0.0703125 ``` --- # Example Sign Test Conclusion - We conclude that we will fail to reject the null hypothesis at the `\(\alpha =0.05\)` significance level because our p-value is 0.07. -- - Note that we can conduct this test in R using the `binom.test` function: ```r binom.test(7,8,0.5) ``` ``` ## ## Exact binomial test ## ## data: 7 and 8 ## number of successes = 7, number of trials = 8, p-value = 0.07031 ## alternative hypothesis: true probability of success is not equal to 0.5 ## 95 percent confidence interval: ## 0.4734903 0.9968403 ## sample estimates: ## probability of success ## 0.875 ``` --- # Paired Sign Test - We can also conduct a paired version of the sign test. -- - Consider again our music/workout data: ``` ## [1] 30 25 20 22 36 40 35 27 30 35 ``` ``` ## [1] 32 27 20 25 30 39 30 30 28 32 ``` -- - Let's compute the sign of the difference for each data value: ```r sign(no_music-with_music) ``` ``` ## [1] -1 -1 0 -1 1 1 1 -1 1 1 ``` -- - Of the 9 nonzero values, 5 are positive. Under the null hypothesis we would expect the positives and negatives to be equally likely. --- # Paired Sign Test Cont. Thus we test the hypothesis using ```r binom.test(5,9,0.5) ``` ``` ## ## Exact binomial test ## ## data: 5 and 9 ## number of successes = 5, number of trials = 9, p-value = 1 ## alternative hypothesis: true probability of success is not equal to 0.5 ## 95 percent confidence interval: ## 0.2120085 0.8630043 ## sample estimates: ## probability of success ## 0.5555556 ``` -- - Thus, just as when we used the sign-rank test we fail to reject the null hypothesis at the `\(\alpha = 0.05\)` significance level. --- # Considerations - Generally when would you want to use a nonparametric test? -- - It is not improper to try both a parametric test and nonparametric test and compare the results. -- - Obviously if the conditions for a parametric test are not met, then you shouldn't use it. -- - If the results of a parametric test and a nonparametric test lead to different conclusions then you should take into account which test has higher power. If a test is underpowered then any conclusions drawn are unreliable. -- - Critical thinking is essential to a successful application of **any** statistical method. -- - The biggest problem with the nonparametric methods we have introduced is the absence of a dual discussion of confidence intervals as we did when covering parametric methods. We note that there are nonparametric methods for estimating confidence intervals, notably [bootstrapping](https://en.wikipedia.org/wiki/Bootstrapping_(statistics)). --- # A Bonus Nonparametric Method - As on additional nonparametric statistic, we briefly introduce the Spearman rank correlation coefficient. -- - The correlation coefficient that we have denoted by `\(R\)` measures the strength and direction of **linear** association between two variables. -- - However, tow variables may have a strong association without the association being strictly linear. -- - Let's look at an example. --- # Nonlinear Association Example - Recall the `epa2021` data we have considered before and let's examine the association between the `engine_displacement` and `comb_mpg` variables. <!-- --> ``` ## [1] "R = -0.703" ``` --- # Spearman - For the previous data the correlation coefficient "misses" the nonlinear association of the data. Instead, we try a nonparametric correlation statistic called Spearman's correlation. This is obtain in R using ```r cor(epa2021$engine_displacement,epa2021$comb_mpg,method="spearman") ``` ``` ## [1] -0.8399713 ``` -- - The interpretation here is that the association between the two variables in our data is stronger than the correlation coefficient suggests because the association is nonlinear as opposed to strictly linear. --- # Nonparametric Tests Video - For further perspective on nonparametric stats, we recommend this video <div class="vembedr" align="center"> <div> <iframe src="https://www.youtube.com/embed/IcLSKko2tsg" width="533" height="300" frameborder="0" allowfullscreen="" data-external="1"></iframe> </div> </div>